Why Isn't Your Deep Network Learning?

A gradient flow story

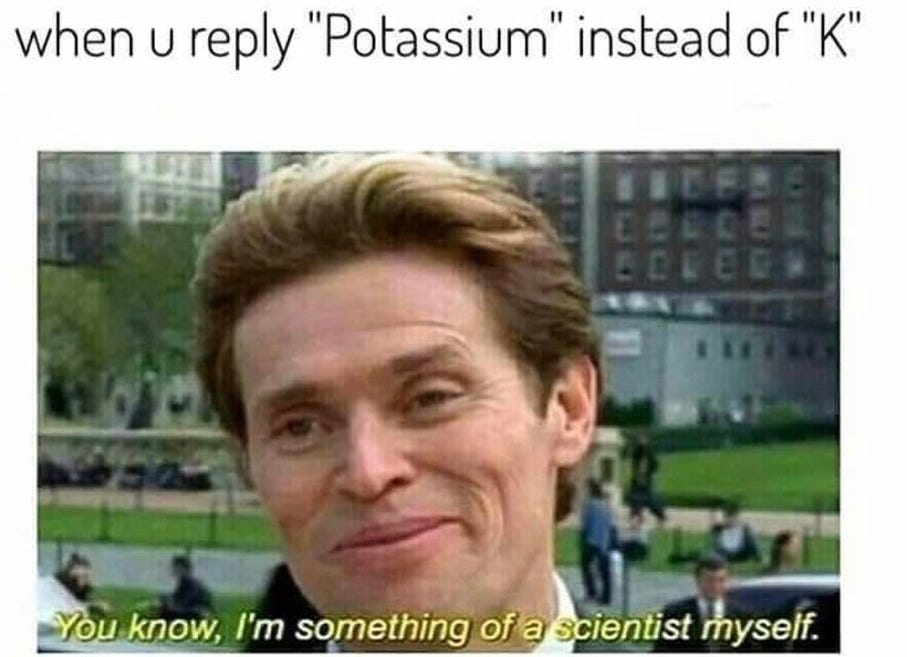

For those who just want to sound smart

Deep networks once refused to learn because gradients either vanished or exploded while traveling backward through many layers. This happened mainly due to poor initialization and activation functions like sigmoid and tanh, whose derivatives fall to nearly zero once the unit saturates.

The Mystery

Backpropagation itself was never wrong. It uses the chain rule to compute how each weight affects the loss and tells us in which direction weights should move.

Shallow networks worked fine with random initialization because gradients passed through only a few layers. The signal was not multiplied many times, so it stayed usable.

But when networks became deep, something strange happened. Adding more layers made performance worse.

By the 1990s, many researchers had concluded that very deep neural networks were not worth pursuing.

The Core Problem

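Backpropagation multiplies derivatives across layers. Ignoring the weights, which contribute their own factor at every layer, the gradient that reaches the early layers looks like: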

g = ∏ f'(x_i)

If each derivative is slightly less than 1, the gradient shrinks layer by layer. If it is slightly greater than 1, the gradient explodes. Deep chains amplify both effects.
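
To put numbers on this, here is a minimal sketch. It assumes every layer contributes the same scalar derivative, which real networks do not, and the helper name `gradient_scale` is made up for illustration. With a factor of 0.9, fifty layers leave roughly 0.005 of the original signal; with 1.1, the signal grows more than a hundredfold.

```python
import numpy as np

def gradient_scale(per_layer_derivative, depth):
    """Product of `depth` identical per-layer derivatives (a toy assumption)."""
    return float(np.prod(np.full(depth, per_layer_derivative)))

for depth in (5, 20, 50):
    shrink = gradient_scale(0.9, depth)  # each layer slightly dampens the signal
    grow = gradient_scale(1.1, depth)    # each layer slightly amplifies the signal
    print(f"depth={depth:2d}  0.9^depth={shrink:.4f}  1.1^depth={grow:.2f}")
```

Neither 0.9 nor 1.1 looks dangerous on its own. Depth is what turns a small bias into a dead or runaway gradient.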

Depth turned learning into a communication problem.

Intuition

Imagine passing a message through a long line of people.

If each person whispers a little quieter, the message disappears. If each person shouts louder, the message becomes noise.

This is exactly what happens to gradients in deep networks.

You can also think of it like a sound fading with distance. The farther it travels from its source, the weaker and more distorted it becomes. In deep networks, the learning signal becomes weaker or unstable as it travels through many layers.

When gradients vanish, early layers stay almost random. Not because their features are useless, but because the learning signal cannot reach them.

Why Sigmoid Made It Worse

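Sigmoid and tanh saturate when inputs are large in magnitude, whether positive or negative. In those regions, their derivatives become almost zero. Even at its best, at an input of 0, the sigmoid derivative is only 0.25.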

When a gradient passes through such a neuron, it is almost completely blocked. This makes vanishing gradients even worse.

The neuron is not inactive. It is insensitive. It cannot respond to error signals anymore.
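
A quick way to see the saturation is to evaluate the derivatives directly. The sketch below uses the standard closed forms, sigmoid'(x) = s(x)(1 - s(x)) and tanh'(x) = 1 - tanh(x)^2. The function names and the sample inputs are arbitrary choices for illustration.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def sigmoid_grad(x):
    """Derivative of sigmoid: s(x) * (1 - s(x)). Peaks at 0.25 when x = 0."""
    s = sigmoid(x)
    return s * (1.0 - s)

def tanh_grad(x):
    """Derivative of tanh: 1 - tanh(x)^2. Peaks at 1.0 when x = 0."""
    return 1.0 - np.tanh(x) ** 2

for x in (0.0, 2.0, 5.0, 10.0):
    print(f"x={x:5.1f}  sigmoid'={sigmoid_grad(x):.2e}  tanh'={tanh_grad(x):.2e}")
```

As the input moves away from zero, both derivatives collapse by orders of magnitude. Any gradient multiplied by them collapses too.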

What Actually Fixed It

Several ideas together made deep learning possible:

ReLU: Its derivative is exactly 1 for positive inputs (and 0 for negative ones). This removes the guaranteed gradient shrinking that sigmoid had.

Better initialization (Xavier, He): Weights are scaled so signal variance stays stable across layers.

Batch Normalization: Keeps activations in a healthy range so neurons do not saturate.

Residual connections: Provide a direct path for information and gradients.

Among these, residual connections changed everything.
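
Before getting to residuals, here is a rough sketch of why the initialization scale matters. It pushes random inputs through a stack of ReLU layers and reports the spread of the final activations. Everything specific here is an assumption picked for illustration: the depth of 50, the width of 256, the "naive" standard deviation of 0.01, and the helper name `final_activation_std`. The He scale used is the usual sqrt(2 / fan_in).

```python
import numpy as np

def final_activation_std(weight_std, depth=50, width=256, seed=0):
    """Forward random inputs through `depth` ReLU layers and return the
    standard deviation of the last layer's activations."""
    rng = np.random.default_rng(seed)
    h = rng.standard_normal((128, width))  # a batch of 128 random inputs
    for _ in range(depth):
        W = rng.standard_normal((width, width)) * weight_std
        h = np.maximum(h @ W, 0.0)  # linear layer (no bias) followed by ReLU
    return float(h.std())

width = 256
naive = final_activation_std(weight_std=0.01, width=width)
he = final_activation_std(weight_std=np.sqrt(2.0 / width), width=width)
print(f"naive init (std=0.01):  final activation std = {naive:.3e}")
print(f"He init (sqrt(2/fan_in)): final activation std = {he:.3e}")
```

With the small fixed scale, the activations shrink toward zero long before the last layer. With the He scale they stay at a usable order of magnitude. The same variance argument applies to gradients flowing backward.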

Residual Intuition

Instead of

y = f(x)

we use

y = x + f(x)

During backpropagation:

dy/dx = 1 + f'(x)

Even if f'(x) is small, the gradient still has a path with value 1.

This does not make a feature important. It only allows the network to judge whether it is important.
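
The effect compounds over depth. The sketch below treats each layer as a scalar function with a local derivative of 0.01, a deliberately tiny made-up value, and compares the end-to-end gradient of 50 plain layers against 50 residual layers. The function names are hypothetical.

```python
import numpy as np

def plain_gradient(local_derivs):
    """End-to-end gradient of stacked y = f(x) layers: product of f'(x_i)."""
    return float(np.prod(local_derivs))

def residual_gradient(local_derivs):
    """End-to-end gradient of stacked y = x + f(x) layers: product of (1 + f'(x_i))."""
    return float(np.prod(1.0 + local_derivs))

local_derivs = np.full(50, 0.01)  # every layer's f'(x) is tiny
print(f"plain:    {plain_gradient(local_derivs):.3e}")
print(f"residual: {residual_gradient(local_derivs):.3f}")
```

The plain stack leaves essentially nothing for the early layers, while the residual stack keeps the gradient close to 1. This is a scalar toy: in a real network the derivatives are matrices, but the identity term plays the same role.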

The Deep Insight

Deep learning works not because networks became deeper, but because we learned how to protect gradients.

Modern Perspective

ResNets and Transformers both rely heavily on skip connections. Every transformer block contains multiple residual paths.

Models like ChatGPT would be extremely hard to train without this realization.

Depth works only with gradient highways.

Closing

If your deep model is not learning, the problem is rarely depth itself. It is almost always gradient flow.